S O F T W A R E

Here you'll find utilities I wrote.

I have included here some basic stuff I developed while working or just having fun. Don't expect polished software: things will work, but probably not in all situations, and some were written for very specific purposes. I hope it helps someone. If you find something useful, please write to me; it would be great to know that some of the code here is usable ;-) Also, feel free to send suggestions or anything else you'd like.

If you think you can use one of the things here, go ahead. Just remember to keep my name in it. Don't use it in commercial stuff except with my (kind) permission, and send me some nice words when reporting bugs. I'm not responsible if you screw things up with something I wrote. By the way, it's always a good idea to read the GNU Manifesto, if you haven't already done so.

It plots synthetic data with a Morlet wavelet superimposed on it, then lets you control the Morlet center and width so you can compare it visually with the data. Useful for learning about the scale and center of wavelets.
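As a rough illustration of what "center" and "width" mean here, the sketch below evaluates the real part of a Morlet wavelet with the standard library only. It is not the code in morlet_interativa.py; the names `morlet`, `sample` and the default `omega0=5.0` are assumptions made for this example.

```python
import math

def morlet(t, center=0.0, width=1.0, omega0=5.0):
    """Real part of a Morlet wavelet: a cosine under a Gaussian envelope."""
    u = (t - center) / width          # time shifted by center, scaled by width
    return math.exp(-0.5 * u * u) * math.cos(omega0 * u)

def sample(center, width, t_min=-10.0, t_max=10.0, n=201):
    """Sample the wavelet on n evenly spaced points in [t_min, t_max]."""
    step = (t_max - t_min) / (n - 1)
    ts = [t_min + i * step for i in range(n)]
    return ts, [morlet(t, center, width) for t in ts]

# Moving the center slides the wavelet; a larger width stretches it.
ts, ys = sample(center=2.0, width=1.5)
peak = max(range(len(ys)), key=lambda i: ys[i])
print("largest sample near t =", round(ts[peak], 3))
```

Plotting `ts` against `ys` on top of the data, for a few values of `center` and `width`, gives the kind of visual comparison described above.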

morlet_interativa.py

Reads any ASCII file and plots its contents, assuming it holds columns of (x,y) data points. For everyday use at the console.
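The reading step could look something like the sketch below. It is only a guess at the idea, not the contents of plotfile.py: the function name `parse_xy`, the `#` comment convention and the choice to skip unparsable lines silently are all assumptions.

```python
def parse_xy(lines):
    """Return (xs, ys) from lines holding two whitespace-separated numbers."""
    xs, ys = [], []
    for line in lines:
        line = line.split("#", 1)[0].strip()   # assumption: '#' starts a comment
        fields = line.split()
        if len(fields) < 2:
            continue                            # blank or single-column line
        try:
            x, y = float(fields[0]), float(fields[1])
        except ValueError:
            continue                            # header text or other junk
        xs.append(x)
        ys.append(y)
    return xs, ys

sample_lines = ["# x y", "0 1.0", "1 2.5", "", "bad line", "2 4.0"]
xs, ys = parse_xy(sample_lines)
print(len(xs), "points read")
```

An open file object can be passed directly in place of `sample_lines`, since iterating over it yields one line at a time.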

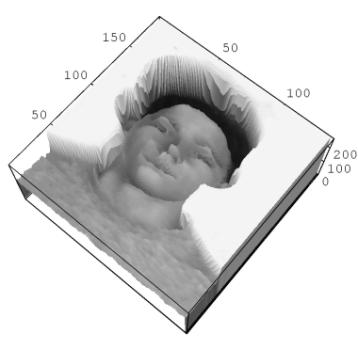

plotfile.py

Creates a data cube (x,y,lambda) with a simple Gaussian emission line of intensity I0, center z0 and width s, all chosen randomly. After one FSR length in z, the spectrum repeats itself. Useful for testing Fabry-Perot data. Part of Bytterfly.
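A minimal sketch of such a cube, using nested lists and the standard library, might look like this. It is not random-gauss-cube.py itself. The function names, the parameter ranges, and the choice to draw I0, z0 and s independently for every pixel are assumptions made for the example.

```python
import math
import random

def periodic_gaussian(z, i0, z0, s, fsr):
    """Gaussian line of peak i0, center z0, width s, repeating every fsr in z."""
    d = (z - z0) % fsr                # offset from the line center, wrapped
    d = min(d, fsr - d)               # distance to the nearest repetition
    return i0 * math.exp(-0.5 * (d / s) ** 2)

def random_cube(nx, ny, nz, fsr, seed=None):
    """Build cube[z][y][x]; every pixel gets its own random i0, z0 and s."""
    rng = random.Random(seed)
    params = [[(rng.uniform(1.0, 10.0),        # i0: peak intensity (made-up range)
                rng.uniform(0.0, fsr),         # z0: line center
                rng.uniform(0.5, 2.0))         # s: line width (made-up range)
               for _ in range(nx)] for _ in range(ny)]
    return [[[periodic_gaussian(z, *params[y][x], fsr)
              for x in range(nx)] for y in range(ny)] for z in range(nz)]

cube = random_cube(nx=4, ny=3, nz=30, fsr=10.0, seed=1)
print(len(cube), len(cube[0]), len(cube[0][0]))
```

Wrapping the distance to the line center is what makes channel z and channel z + FSR hold the same value, which mimics the periodic response of a Fabry-Perot.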

random-gauss-cube.py

The Brazilian Tunable Filter Imager (BTFI) software system. A suite of software routines to reduce the Fabry-Perot data from BTFI. Follow the link to the Bytterfly page.

SOAR Telescope Integral Field Unit data simulator. A complete data simulator for the IFU spectrograph. Simulates all aspects of data output for testing and planning purposes. Please refer to Coala's page for full information.

coala

Integrates an elliptical galaxy luminosity profile (Sersic's or de Vaucouleurs') to obtain the total extrapolated galaxy luminosity. Of interest to astronomers only.
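For the curious, the integral can be sketched in a few lines. This is not the code in lumin_04.c. It assumes circular isophotes, a Sersic profile I(r) = I_e exp(-b [(r/r_e)^(1/n) - 1]) with n >= 0.5, and the common approximation b = 2n - 1/3; the function names are made up for the example. The de Vaucouleurs case is n = 4.

```python
import math

def sersic_b(n):
    """Approximate b_n (assumption: the simple 2n - 1/3 formula is good enough)."""
    return 2.0 * n - 1.0 / 3.0

def total_luminosity_analytic(i_e, r_e, n):
    """Closed form of 2*pi * integral of I(r) r dr from 0 to infinity."""
    b = sersic_b(n)
    return (2.0 * math.pi * n * i_e * r_e ** 2
            * math.exp(b) * math.gamma(2.0 * n) / b ** (2.0 * n))

def total_luminosity_numeric(i_e, r_e, n, x_max=40.0, steps=20000):
    """Simpson's rule after substituting r = r_e * x**n (steps must be even)."""
    b = sersic_b(n)

    def f(x):
        return math.exp(-b * (x - 1.0)) * x ** (2.0 * n - 1.0)

    h = x_max / steps
    total = f(0.0) + f(x_max)
    for k in range(1, steps):
        total += (4.0 if k % 2 else 2.0) * f(k * h)
    return 2.0 * math.pi * i_e * r_e ** 2 * n * total * h / 3.0

numeric = total_luminosity_numeric(1.0, 1.0, 4.0)
analytic = total_luminosity_analytic(1.0, 1.0, 4.0)
print(numeric, analytic)
```

The two numbers should agree closely, which is a handy sanity check on either implementation.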

lumin_04.c lumin.h

Galaxy Dynamics -- elldisc simulates the projected mass of an arbitrary ellipsoid (a model of an elliptical galaxy) in an arbitrary direction, so projected and intrinsic properties can be compared. Remember: astronomers only see the projected quantities. Full documentation inside.

Elldisc-0.93.tgz

Calculates the semi-axis lengths, inclination angle and ellipticity of an ellipse. Probably only for those acquainted with elliptical galaxies.

ellipsis.c

In the early days (:-) we used to calculate a star's magnitude using counts from a photomultiplier at the telescope's focus. This little program does the calculations. You're not interested in it, are you?
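The core of such a calculation is short enough to sketch here. This is not the original C program: the function name and the `zero_point` parameter are assumptions, and a real reduction would also correct for atmospheric extinction and color terms, which are left out.

```python
import math

def instrumental_magnitude(star_counts, sky_counts, exposure_s, zero_point=0.0):
    """Magnitude from photomultiplier counts via the sky-subtracted count rate."""
    net_rate = (star_counts - sky_counts) / exposure_s   # counts per second
    if net_rate <= 0.0:
        raise ValueError("star is not brighter than the sky")
    return zero_point - 2.5 * math.log10(net_rate)

faint = instrumental_magnitude(1100, 100, 10.0)     # net rate: 100 counts/s
bright = instrumental_magnitude(10100, 100, 10.0)   # net rate: 1000 counts/s
print(faint - bright)
```

A star with ten times the net count rate comes out 2.5 magnitudes brighter, which is just the definition of the magnitude scale.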

fotomultiplier-magnitude.c

Searches a NED photometry batch job result and separates the data by galaxy and by type. For astronomers only (or Perl regexp students)...

NED-phot.pl NED-ponfot.dat

Reads the IRAF/Nfit1d output and rearranges the output values. If you don't know what IRAF is, you're not likely to need this, although you could learn some Perl from it.

classif.pl

Organizes the data from Prugniel A&AS 1998, 128, 299-308. Astronomers...

prugniel-slicer.pl

Calculates a solar atmosphere model, based on Eva Novotny, Stellar Atmospheres.

solar-atmos.c

Creates a FITS image with a 3D sin() pattern in it. It was created to test other software. Uses the cfitsio library.

sinmax.c sinmax.Makefile

Transforms an image from Cartesian to polar coordinates, i.e., from (x,y) to (rho,phi). Useful for making image Fourier transforms.
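One simple way to do such a transform is to loop over the (rho,phi) grid and look up the nearest pixel in the original image. The sketch below does that on plain nested lists. It is not transpolar.c: the function name, the nearest-neighbour lookup and the choice of the image center as origin are assumptions for the example.

```python
import math

def to_polar(image, n_rho, n_phi):
    """Resample image[y][x] onto a (rho, phi) grid around the image center."""
    ny, nx = len(image), len(image[0])
    cx, cy = (nx - 1) / 2.0, (ny - 1) / 2.0
    rho_max = min(cx, cy)                       # largest circle inside the image
    polar = []
    for i in range(n_rho):
        rho = rho_max * i / max(n_rho - 1, 1)
        row = []
        for j in range(n_phi):
            phi = 2.0 * math.pi * j / n_phi
            x = int(round(cx + rho * math.cos(phi)))   # nearest-neighbour lookup
            y = int(round(cy + rho * math.sin(phi)))
            row.append(image[y][x])
        polar.append(row)
    return polar

# Test pattern: each pixel encodes its own coordinates as x + 10*y.
image = [[x + 10 * y for x in range(5)] for y in range(5)]
polar = to_polar(image, n_rho=3, n_phi=8)
print(polar[0])
```

Row 0 of the result holds the center pixel repeated for every angle, and each later row samples a larger circle. Interpolating between pixels instead of taking the nearest one would give smoother results at the cost of more code.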

transpolar.c

Converts a text data file into a FITS image file. Probably useful mostly for astronomers.

ascii2fits.c ascii2fits.Makefile

Simple program to read the gray levels from a BMP image file.

bmp.c

An evolution of led-menus. This one controls a stepper motor through the parallel port, now on Linux. The stepper motor is not included in the distribution.

motor-passo.c motor-passo.h motor-passo.Makefile

It switches LEDs connected to the parallel port on and off in animated sequences. You can make your computer control your Christmas tree in a very awkward way. Made to test parallel port control under DOS :(

led-menus.c

Reads the serial port on Linux with both canonical and non-canonical methods. It was written to interface with a multimeter. Based on the Serial Programming HOWTO.

serial_read_canon.c serial_read_not_canon.c serial_read.Makefile

A database-driven web page designed to manage house and apartment sales and rentals. It allows including images, descriptions, etc. Written in PHP and MySQL. Complete.

FFerrari-ImobilDB.tgz

A simple shell address book, with names, addresses, etc. Simple and useful. It stores the data in a plain text file and exports to HTML. Also useful for learning some Perl tricks, with FORMAT for example. Includes an X version with Perl/Tk.

agenda_0.21.pl xagenda.pl

A set of shell scripts to make system backups with a CD-RW drive. Put them in your crontab and sleep well :-)

FFerrari-backup-cdrw.tgz

Calculates the average car fuel consumption. More didactic than anything else.

consumo-carro.c consumo-carro.pdf

Walks the current directory tree, replacing all spaces and strange characters in filenames with an underscore ('_').
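The original is a Perl script; the sketch below shows the same idea in Python and is not a translation of it. Which characters count as "strange" is an assumption here (anything other than ASCII letters, digits, dot, underscore and hyphen), and the names `clean_name` and `rename_tree` are made up for the example.

```python
import os
import re

def clean_name(name):
    """Replace every character outside [A-Za-z0-9._-] with an underscore."""
    return re.sub(r"[^A-Za-z0-9._-]", "_", name)

def rename_tree(root="."):
    """Walk root bottom-up so directories are renamed after their contents."""
    renamed = []
    for dirpath, dirnames, filenames in os.walk(root, topdown=False):
        for name in filenames + dirnames:
            new = clean_name(name)
            if new == name:
                continue
            src = os.path.join(dirpath, name)
            dst = os.path.join(dirpath, new)
            if os.path.exists(dst):
                continue                      # never overwrite an existing entry
            os.rename(src, dst)
            renamed.append((src, dst))
    return renamed

print(clean_name("my file (1).txt"))
```

Walking bottom-up matters: if a directory were renamed before its contents were visited, the paths collected for its children would no longer exist. Try `clean_name` on a few names before letting `rename_tree` loose on a real directory.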

espacios.pl

This script creates a skeleton of a LaTeX file with everything needed to start typing: documentclass, usepackages, document, title, author...

texplate

The same as TeXplate, but for C.

texplate

Utility script to change the network configuration on a Windowze system. It uses the netsh utility that comes with Win 2000/XP to change the network configuration from a .bat file. Before using it, edit the end of the files config-casa.txt ('casa' means home) and config-trabalho.txt ('trabalho' means work), where the IP addresses are defined. It also comes with an auto.bat script to activate the interface via DHCP.

swap-net-win.zip

Converts a set of JPG images in the current directory to lower resolutions. Damn simple, but useful.

convertejpg.csh

Reads the acpi command-line output and warns the user in the shell. Useful if defined as 'periodic' in the TCSH shell.

bateria.pl

Generates the page you are reading from a description file.

publicad.pl descricao.txt

Compares the math.h exp() function with a home-made one, calculated by series expansion. Interesting for those who blindly trust all the built-in numeric functions.
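The same experiment is easy to repeat in Python against `math.exp`. The sketch below is not exponential.c; the function name `exp_series`, the stopping rule and the sample values of x are assumptions chosen for the example.

```python
import math

def exp_series(x, max_terms=200):
    """Sum the Taylor series 1 + x + x**2/2! + ... until terms stop mattering."""
    total, term = 1.0, 1.0
    for k in range(1, max_terms):
        term *= x / k                       # x**k / k! built from the previous term
        total += term
        if abs(term) < 1e-17 * abs(total):
            break
    return total

for x in (1.0, 10.0, -20.0):
    approx, exact = exp_series(x), math.exp(x)
    print(x, approx, exact, abs(approx - exact) / exact)
```

For positive x the two agree to nearly full double precision. For large negative x the naive series adds and subtracts huge terms to reach a tiny result, so the relative error printed in the last column should be expected to get much worse. Computing 1 / exp_series(-x) instead avoids that cancellation.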

exponential.c

Example of dynamic matrix allocation in C. It is here so I can consult it now and then.

malloc_matriz2d.c

Silly progress indicator in the shell using the |/-\ characters.
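The trick is to print a carriage return before each character so the cursor goes back to the start of the same line. A minimal Python sketch of the idea follows. It is not mesma_linha.c, and the names `spinner_frames` and `spin` are made up for the example.

```python
import itertools
import sys
import time

def spinner_frames(count):
    """Return the first count frames of the endless | / - \\ cycle."""
    return list(itertools.islice(itertools.cycle("|/-\\"), count))

def spin(count, delay=0.1, out=sys.stdout):
    """Redraw one character in place; '\\r' returns to the start of the line."""
    for frame in spinner_frames(count):
        out.write("\r" + frame)
        out.flush()                         # show the frame immediately
        time.sleep(delay)
    out.write("\r \r")                      # erase the spinner when done
    out.flush()
```

Calling `spin(20)` in a terminal shows about two seconds of spinning. The `flush()` call matters because standard output is usually line-buffered and no newline is ever written.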

mesma_linha.c

A simple C++ stack implementation example. (I was learning C++ :-)

pilha.cpp

Rio Grande, RS - Brasil